Coordination intelligence for multi-agent AI.

Stop AI agents from burning money on silent failures. Real-time detection across CrewAI, LangGraph, OMA, or any other framework via the generic Python adapter.

```python
# LangGraph example. Two lines, detection runs automatically.
from agentsonar import monitor
graph = monitor(graph)
```

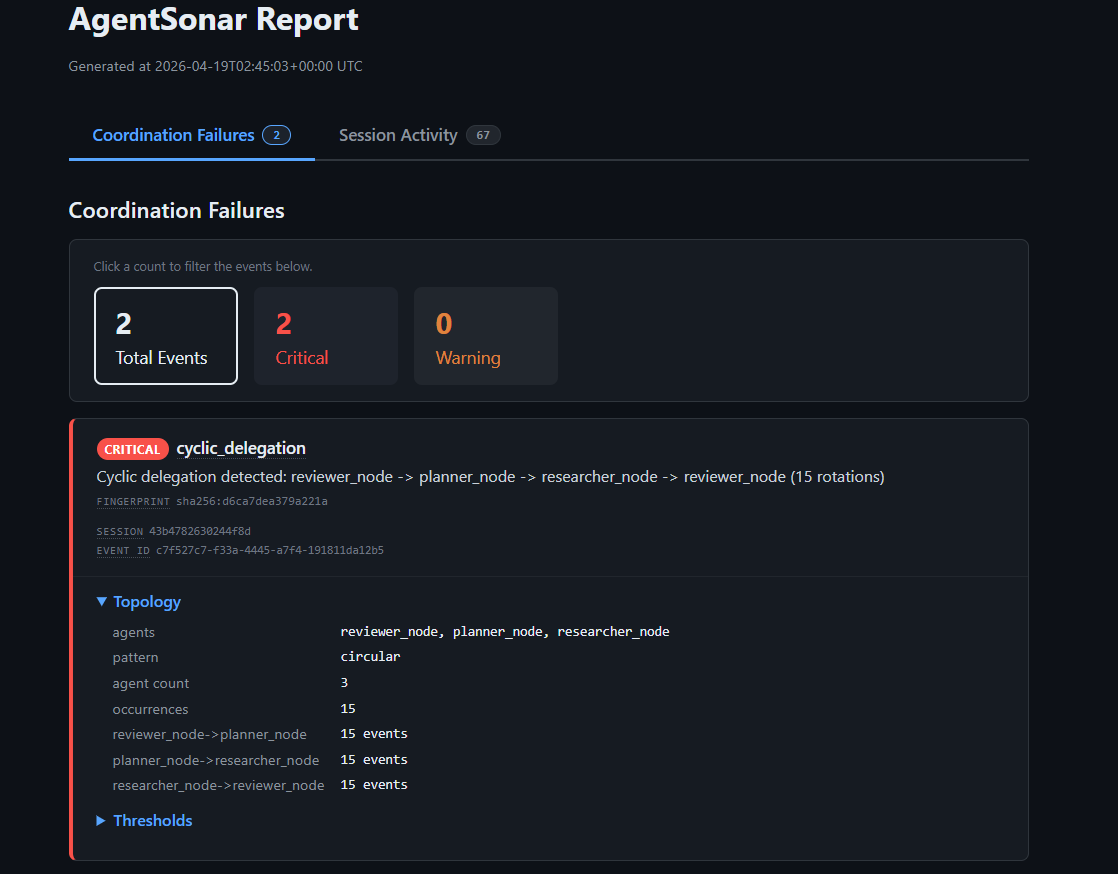

```text
[SONAR 2026-04-19T02:45:00.012Z] session started (id: 43b4782630244f8d)
[SONAR 2026-04-19T02:45:01.117Z] delegation: reviewer_node -> planner_node (#1)
[SONAR 2026-04-19T02:45:01.842Z] delegation: planner_node -> researcher_node (#2)
[SONAR 2026-04-19T02:45:02.501Z] delegation: researcher_node -> reviewer_node (#3)
[SONAR 2026-04-19T02:45:02.503Z] cycle found: [reviewer_node -> planner_node -> researcher_node -> reviewer_node] 1 rotations
[SONAR 2026-04-19T02:45:08.917Z] ⚠ WARNING cycle: [reviewer_node -> planner_node -> researcher_node -> reviewer_node] 5 rotations
[SONAR 2026-04-19T02:45:18.412Z] 🚨 CRITICAL cycle: [reviewer_node -> planner_node -> researcher_node -> reviewer_node] 15 rotations
```

Your agents are going to misbehave.

When agents get stuck in infinite loops, spam each other with redundant delegations, or blow through a rate limit, standard tracing tools show you a timeline after the fact. AgentSonar surfaces the failure as it's happening.

Agents stuck in a loop

Two or more of your AI agents end up talking in circles. One keeps asking for changes, the next one keeps making them. By morning, you've spent $50 in API calls and the task still isn't finished.
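The sample log at the top of the page shows how a loop like this surfaces: each delegation is a directed edge, a cycle is reported the first time the chain of hand-offs returns to an agent already in it, and the alert escalates as the same cycle keeps completing (WARNING at 5 rotations, CRITICAL at 15). The page doesn't document AgentSonar's actual algorithm, so this is only a minimal sketch under stated assumptions: one chain of hand-offs at a time, cycles identified by their rotation-invariant node order, and thresholds copied from the log. CycleTracker, canonical, and LEVELS are hypothetical names, not part of the agentsonar API.

```python
from collections import defaultdict
from typing import Optional

# Hypothetical severity levels, copied from the sample log:
# first detection, WARNING at 5 rotations, CRITICAL at 15.
LEVELS = {1: "cycle found", 5: "WARNING", 15: "CRITICAL"}


def canonical(cycle: list[str]) -> tuple[str, ...]:
    """Rotate a cycle to start at its smallest node name, so
    [a, b, c] and [b, c, a] count as the same loop."""
    i = cycle.index(min(cycle))
    return tuple(cycle[i:] + cycle[:i])


class CycleTracker:
    """Hypothetical cycle detector over a stream of delegation edges."""

    def __init__(self) -> None:
        self.chain: list[str] = []  # current run of consecutive hand-offs
        self.rotations: dict[tuple[str, ...], int] = defaultdict(int)

    def delegation(self, src: str, dst: str) -> Optional[tuple[str, list[str], int]]:
        # A hand-off that doesn't continue the current chain starts a new one.
        if not self.chain or self.chain[-1] != src:
            self.chain = [src]
        self.chain.append(dst)

        # Has the chain come back to an agent it already passed through?
        earlier = self.chain[:-1]
        if dst not in earlier:
            return None
        path = self.chain[earlier.index(dst):]  # e.g. [a, b, c, a]
        key = canonical(path[:-1])
        self.rotations[key] += 1
        self.chain = [dst]  # start counting the next rotation

        count = self.rotations[key]
        level = LEVELS.get(count)
        return (level, path, count) if level else None


# Replay the three-agent loop from the sample log for 15 rotations.
tracker = CycleTracker()
alerts = []
for _ in range(15):
    for src, dst in [
        ("reviewer_node", "planner_node"),
        ("planner_node", "researcher_node"),
        ("researcher_node", "reviewer_node"),
    ]:
        alert = tracker.delegation(src, dst)
        if alert:
            alerts.append(alert)
            level, path, count = alert
            print(f"{level}: [{' -> '.join(path)}] {count} rotations")
```

Replaying the logged loop yields the same three alerts as the log: a first detection at 1 rotation, WARNING at 5, and CRITICAL at 15. Keying the counts on the canonical form means a loop first observed starting at a different agent still counts as the same cycle.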

Same task assigned 15 times

An agent gives the same task to another agent 15 times before realizing it's already been done. Not a bug. Just $30 of API calls on duplicate work.

One agent doing too much, too fast

An agent kicks off way more work than expected. It calls tools in tight loops, creates dozens of sub-agents, or blasts through your API rate limit. Drains your wallet before anyone notices.
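How AgentSonar measures "too much, too fast" isn't specified here. One common way to flag it, shown as a minimal sketch, is a sliding time window per agent: record a timestamp for every action the agent takes and alert when the count inside the window exceeds a budget. RateWatch and its defaults (20 actions per 10 seconds) are hypothetical; timestamps are passed in explicitly so the sketch is deterministic.

```python
from collections import defaultdict, deque


class RateWatch:
    """Hypothetical per-agent rate check: flag an agent that takes more than
    max_events actions (tool calls, sub-agent spawns, LLM calls) within
    window_s seconds. The defaults are made-up placeholders."""

    def __init__(self, max_events: int = 20, window_s: float = 10.0) -> None:
        self.max_events = max_events
        self.window_s = window_s
        self.events: dict[str, deque] = defaultdict(deque)

    def record(self, agent: str, now: float) -> bool:
        """Log one action by `agent` at time `now`; True means over budget."""
        q = self.events[agent]
        q.append(now)
        # Drop timestamps that have slid out of the window.
        while q and q[0] <= now - self.window_s:
            q.popleft()
        return len(q) > self.max_events


# Replay a hypothetical burst: one agent fires 30 tool calls, 0.1 s apart.
watch = RateWatch()
flagged_at = None
for i in range(30):
    if watch.record("researcher", now=i * 0.1) and flagged_at is None:
        flagged_at = i
print(f"researcher flagged at action #{flagged_at + 1}")
```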

Three failure classes ship today. Cascade failures, deadlocks, and more are on the roadmap. Hit a coordination failure we don't catch yet? Request a detector.

Built for you if

- You're shipping multi-agent products on CrewAI, LangGraph, OMA, or your own Python orchestrator, and they're hitting real users.

- You've been surprised by an OpenAI or Anthropic bill, or you're worried you will be.

- Your team can't tell loops from legit retries when something's stuck overnight, and you'd like to.

What you actually get.

Three detection layers, four output files per run, zero LLM-as-judge. Everything is computed deterministically over the agent graph.

- Real-time alerts stream to stderr the moment a coordination failure is detected.

- Live JSONL timeline. Every event, flushed on write. Run tail -f on it while your crew runs.

- Standalone HTML report on shutdown. No external CSS, no JavaScript, no network requests. Email it, attach it to a ticket.

- Stable coordination fingerprints across runs. Correlate today's failure with yesterday's.
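The page doesn't spell out how fingerprints are computed or what the JSONL records contain. A minimal sketch, assuming a fingerprint is a hash of the failure type plus the agent path, with closed cycles rotated into a canonical order, and assuming one JSON object per line with hypothetical field names (ts, kind, path, fingerprint):

```python
import hashlib
import io
import json
import time
from typing import IO, Any


def fingerprint(kind: str, path: list[str]) -> str:
    """Hypothetical fingerprint: hash of the failure kind plus the agent path.
    Closed cycles are rotated to start at their smallest node name, so the
    same loop hashes the same no matter which agent it was first seen at.
    Session IDs and timestamps are left out, so it is stable across runs."""
    if len(path) > 1 and path[0] == path[-1]:
        nodes = path[:-1]
        i = nodes.index(min(nodes))
        path = nodes[i:] + nodes[:i] + [nodes[i]]
    raw = kind + "|" + "->".join(path)
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()[:16]


def write_event(fh: IO[str], kind: str, path: list[str], **fields: Any) -> dict[str, Any]:
    """Append one event as a single JSON line and flush right away, so a
    concurrent `tail -f` on the file sees it immediately."""
    event = {"ts": time.time(), "kind": kind, "path": path,
             "fingerprint": fingerprint(kind, path), **fields}
    fh.write(json.dumps(event) + "\n")
    fh.flush()
    return event


# A real run would use open("timeline.jsonl", "a"); StringIO keeps the demo in memory.
buf = io.StringIO()
today = write_event(buf, "cyclic_delegation",
                    ["reviewer", "planner", "researcher", "reviewer"], rotations=5)
# The same loop, first seen at a different agent in a later run.
tomorrow = fingerprint("cyclic_delegation", ["planner", "researcher", "reviewer", "planner"])
print(today["fingerprint"] == tomorrow)
```

Leaving session IDs and timestamps out of the hash is what lets today's failure line up with yesterday's; flushing after every write is what makes the file safe to tail mid-run. Open edges (as opposed to closed cycles) are deliberately not rotated, so a -> b and b -> a stay distinct.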

Two-line integration. Any framework.

Same detection engine, same output. Pick the adapter that matches your stack.

CrewAI (pip extra: crewai)

```python
from agentsonar import AgentSonarListener
sonar = AgentSonarListener()
```

LangGraph (pip extra: langgraph)

```python
from agentsonar import monitor
graph = monitor(graph)
```

Open Multi-Agent (OMA), npm package: @agentsonar/oma

```typescript
import { emitDelegations } from "@agentsonar/oma"
await emitDelegations(tasks)
```

Don't see your framework?

Plug in directly with our generic Python adapter. Works with any orchestrator, hand-rolled loop, or framework we haven't built a first-class adapter for yet:

```python
from agentsonar import monitor_orchestrator
sonar = monitor_orchestrator()
sonar.delegation("planner", "researcher")  # report a hand-off: planner -> researcher
```
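In a hand-rolled loop, the delegation call belongs wherever one agent hands work to another. A minimal sketch, assuming only what the snippet above shows (the object from monitor_orchestrator() accepts delegation(from_agent, to_agent)); the run loop, the two agents, and RecordingSink, a stand-in so the sketch runs without agentsonar installed, are all hypothetical.

```python
from typing import Callable, Optional, Protocol


class DelegationSink(Protocol):
    """Anything with a delegation(src, dst) method, such as the object
    monitor_orchestrator() returns in the snippet above."""

    def delegation(self, src: str, dst: str) -> None: ...


class RecordingSink:
    """Hypothetical stand-in so this sketch runs without agentsonar installed."""

    def __init__(self) -> None:
        self.edges: list[tuple[str, str]] = []

    def delegation(self, src: str, dst: str) -> None:
        self.edges.append((src, dst))


# A hypothetical agent takes a task and returns (next_agent, payload);
# next_agent is None when the agent has finished.
Agent = Callable[[str], tuple[Optional[str], str]]


def run(agents: dict[str, Agent], start: str, task: str,
        sonar: DelegationSink, max_hops: int = 50) -> str:
    current = start
    for _ in range(max_hops):
        nxt, task = agents[current](task)
        if nxt is None:
            return task
        sonar.delegation(current, nxt)  # report every hand-off as it happens
        current = nxt
    raise RuntimeError(f"no result after {max_hops} hand-offs")


agents: dict[str, Agent] = {
    "planner": lambda t: ("researcher", f"research: {t}"),
    "researcher": lambda t: (None, f"notes on {t}"),
}
sink = RecordingSink()  # swap in monitor_orchestrator() in a real run
result = run(agents, "planner", "pricing page", sink)
print(result, sink.edges)
```

In a real run you would pass the object returned by monitor_orchestrator() instead of RecordingSink; nothing else in the loop needs to change.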

Or email us and we'll add native support for your framework.

Stop the bleeding. Prevent Mode (shipping this week).

Detection alone tells you what happened. Prevent Mode, which is opt-in, raises an exception the moment a tracked failure crosses its trip threshold, so your code can stop a runaway loop before the next LLM call.

```python
from agentsonar import monitor_orchestrator, PreventError

sonar = monitor_orchestrator(config={
    "prevent": {"cyclic_delegation": True}
})

try:
    while True:
        sonar.delegation("reviewer", "generator")
        # ... your agents run ...
        sonar.delegation("generator", "reviewer")  # hand-back closes the loop
except PreventError as e:
    print(f"Stopped: {e.reason}")
    print(f"Cycle: {' -> '.join(e.cycle_path)}")
```

Available today in the generic Python, LangGraph, and OMA (TypeScript) adapters. Off by default; opt in with one config key.
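Prevent Mode works because the exception surfaces from inside the delegation call, before the agent on the receiving end makes its next LLM call. The sketch below illustrates that control flow for a two-agent loop. LoopTripped and PingPongBreaker are hypothetical stand-ins, not agentsonar's PreventError or its detector, and the trip point of 5 rotations follows the default quoted in the FAQ.

```python
from typing import Optional


class LoopTripped(Exception):
    """Hypothetical stand-in mirroring the reason and cycle_path
    attributes that PreventError exposes in the example above."""

    def __init__(self, reason: str, cycle_path: list[str]) -> None:
        super().__init__(reason)
        self.reason = reason
        self.cycle_path = cycle_path


class PingPongBreaker:
    """Hypothetical breaker for two-agent loops only: raise once the same
    pair has bounced work back and forth `trip_at` times."""

    def __init__(self, trip_at: int = 5) -> None:
        self.trip_at = trip_at
        self.open_edge: Optional[tuple[str, str]] = None  # first half of a rotation
        self.pair: Optional[frozenset[str]] = None
        self.rotations = 0

    def delegation(self, src: str, dst: str) -> None:
        if self.open_edge != (dst, src):
            self.open_edge = (src, dst)  # wait for the hand-back
            return
        # This hand-off reverses the previous one: one full rotation.
        pair = frozenset((src, dst))
        self.rotations = self.rotations + 1 if pair == self.pair else 1
        self.pair = pair
        self.open_edge = None
        if self.rotations >= self.trip_at:
            raise LoopTripped(f"cyclic delegation hit {self.rotations} rotations",
                              [dst, src, dst])


breaker = PingPongBreaker(trip_at=5)
llm_calls = 0
try:
    while True:
        breaker.delegation("reviewer", "generator")
        llm_calls += 1  # generator revises the draft
        breaker.delegation("generator", "reviewer")
        llm_calls += 1  # reviewer asks for more changes
except LoopTripped as e:
    print(f"Stopped: {e.reason} after {llm_calls} LLM calls")
    print(f"Cycle: {' -> '.join(e.cycle_path)}")
    stopped = e  # keep a reference; Python unbinds `e` after the except block
```

The breaker raises on the fifth hand-back, so the ninth LLM call is the last one that runs; without it, the while loop would keep going until someone noticed.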

What's next.

AgentSonar today ships Detection. The substrate it runs on (a coordination graph with stable fingerprints and framework-agnostic adapters) was built so the same primitive can power a handful of adjacent products. The sequencing:

- ~2–3 weeks: OpenAI Agents SDK and Claude Agent SDK adapters. The same one-import integration you already get on CrewAI and LangGraph, for the two SDKs most teams are building agents on right now.

- ~6 weeks: Cost attribution. Token cost per agent and per delegation edge, answering "which coordination pattern burned the most this week?"
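Cost attribution isn't shipped yet, so how it will work isn't documented. Conceptually, per-edge cost is a group-by: tag each LLM call with the delegation edge that triggered it, price its tokens, and sum per edge. A minimal sketch with hypothetical record keys (src, dst, input_tokens, output_tokens), made-up token counts, and placeholder prices:

```python
from collections import Counter
from typing import Iterable, Mapping

# Hypothetical USD prices per million tokens; use your provider's actual rates.
USD_PER_MTOK = {"input": 3.00, "output": 15.00}


def edge_costs(calls: Iterable[Mapping]) -> Counter:
    """Sum dollar cost per (delegator, delegatee) edge. Assumes each LLM call
    record is tagged with the delegation edge that triggered it."""
    totals: Counter = Counter()
    for c in calls:
        usd = (c["input_tokens"] * USD_PER_MTOK["input"]
               + c["output_tokens"] * USD_PER_MTOK["output"]) / 1_000_000
        totals[(c["src"], c["dst"])] += usd
    return totals


# Made-up call records for illustration.
calls = [
    {"src": "reviewer", "dst": "writer", "input_tokens": 8000, "output_tokens": 1500},
    {"src": "writer", "dst": "reviewer", "input_tokens": 6000, "output_tokens": 2000},
    {"src": "reviewer", "dst": "writer", "input_tokens": 8000, "output_tokens": 1500},
    {"src": "planner", "dst": "researcher", "input_tokens": 2000, "output_tokens": 500},
]
costs = edge_costs(calls)
for (src, dst), usd in costs.most_common():
    print(f"{src} -> {dst}: ${usd:.4f}")
```

Summing the same records by src or dst instead of by edge would give a per-agent view.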

Frequently asked questions

Common questions about detecting and preventing multi-agent coordination failures in CrewAI, LangGraph, OMA, and other agent frameworks.

How do I detect when my AI agents are stuck in an infinite loop?

AI agents in multi-agent frameworks like CrewAI and LangGraph can get stuck in cyclic delegation, where two or more agents keep handing work back and forth without making progress. Standard tracing tools show you the timeline after the fact, when the bill arrives. AgentSonar detects the loop in real time, the moment the cycle forms. You can also opt into Prevent Mode to automatically stop the loop before it racks up token costs.

Why are my CrewAI agents looping forever?

In CrewAI's hierarchical mode, a manager agent that's never satisfied with a worker's output can keep reassigning the same task indefinitely. This often happens when the agent's prompt is ambiguous or the success criteria aren't well defined. AgentSonar catches this pattern, called repetitive_delegation, when the same agent-to-agent edge fires more than 5 times in a window.
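The rule as stated, "the same edge fires more than 5 times in a window", can be sketched with a fixed-size window of recent delegation events and a running count per edge. The page doesn't say whether the window is measured in time or in events; this minimal sketch assumes a window of the last 20 events, and RepeatWatch is a hypothetical name.

```python
from collections import Counter, deque
from typing import Optional


class RepeatWatch:
    """Hypothetical repetitive-delegation check: flag an agent-to-agent edge
    that fires more than `limit` times within the last `window` delegations.
    limit=5 follows the rule above; window=20 is a made-up default."""

    def __init__(self, limit: int = 5, window: int = 20) -> None:
        self.limit = limit
        self.recent: deque = deque(maxlen=window)
        self.counts: Counter = Counter()

    def delegation(self, src: str, dst: str) -> Optional[int]:
        """Record one delegation; return the edge's count if over the limit."""
        edge = (src, dst)
        if len(self.recent) == self.recent.maxlen:
            # deque drops its oldest entry on append, so un-count it first.
            self.counts[self.recent[0]] -= 1
        self.recent.append(edge)
        self.counts[edge] += 1
        n = self.counts[edge]
        return n if n > self.limit else None


# A hypothetical manager reassigns the same task to the writer seven times.
watch = RepeatWatch()
fired = [watch.delegation("manager", "writer") for _ in range(7)]
for n in fired:
    if n:
        print(f"repetitive_delegation: manager -> writer fired {n} times")
```

Here the check trips on the sixth and seventh reassignments; older events falling out of the window are un-counted, so a slow trickle of legitimate retries doesn't accumulate forever.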

How can I stop my LangGraph agents from running forever?

LangGraph's recursion_limit catches infinite loops, but only after they've already happened, usually after 25 or more iterations. AgentSonar catches the cycle on rotation 5 by default (configurable), giving you both real-time alerts and an opt-in PreventError that stops the graph before the next LLM call.

How much do runaway AI agents cost?

A reviewer-writer loop running overnight can burn $50 to $500 in API calls. One public incident reported a $47,000 bill caused by two agents stuck in an infinite conversation loop for 11 days, with weekly costs climbing from $127 to $18,400 before anyone noticed. That is exactly the pattern AgentSonar's cyclic_delegation detector catches in real time, on rotation 5 by default.
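The arithmetic behind figures like these is simple: rotations × LLM calls per rotation × cost per call. A worked sketch with made-up inputs (token counts, prices, and loop speed are all hypothetical; real loops can cost more because each turn may resend a growing history):

```python
def loop_cost(rotations: int, calls_per_rotation: int, tokens_in: int,
              tokens_out: int, usd_in_per_mtok: float, usd_out_per_mtok: float) -> float:
    """Dollar cost of a loop, assuming a fixed token count per LLM call."""
    per_call = (tokens_in * usd_in_per_mtok + tokens_out * usd_out_per_mtok) / 1_000_000
    return rotations * calls_per_rotation * per_call


# Hypothetical reviewer-writer loop: 2 LLM calls per rotation, 10k input and
# 1k output tokens per call, $3 / $15 per million tokens, one rotation every
# 30 seconds for an 8-hour night (960 rotations).
overnight = loop_cost(960, 2, 10_000, 1_000, 3.0, 15.0)
stopped_at_5 = loop_cost(5, 2, 10_000, 1_000, 3.0, 15.0)
print(f"overnight: ${overnight:.2f}, stopped at rotation 5: ${stopped_at_5:.2f}")
```

With these inputs the overnight loop costs $86.40, while stopping it on rotation 5 caps the spend at $0.45.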

What is multi-agent coordination failure?

When AI agents work together, they can fail in ways that don't produce errors or stack traces. Three common patterns: cyclic delegation (loops), repetitive delegation (the same task assigned repeatedly), and resource exhaustion (one agent kicks off too much work too fast). These silent failures don't crash anything; they just burn tokens. AgentSonar specializes in detecting them across CrewAI, LangGraph, OMA, and any Python agent framework.

Does AgentSonar work with the OpenAI Agents SDK?

Native OpenAI Agents SDK and Claude Agent SDK adapters are shipping in 2 to 3 weeks. For now, the generic Python adapter (monitor_orchestrator) works with any agent framework, including the OpenAI Agents SDK, by accepting explicit delegation events. Two lines of code to wire it up.

How is AgentSonar different from Helicone or Langfuse?

Helicone and Langfuse are LLM observability tools that track per-call token cost, latency, and prompt history. AgentSonar focuses on coordination intelligence: detecting when agents fail to coordinate (loops, repetition, runaway delegation). Use them together: Helicone or Langfuse for per-call observability, AgentSonar for cross-agent coordination failures.

Get started.

Apache 2.0. Closed beta, expanding across CrewAI, LangGraph, and Open Multi-Agent.

Free during closed beta. Usage-based pricing at GA.

```shell
pip install agentsonar                # any framework
pip install "agentsonar[crewai]"      # for CrewAI
pip install "agentsonar[langgraph]"   # for LangGraph
npm install @agentsonar/oma           # for Open Multi-Agent
```